NVIDIA Tesla V100 GPU (32GB, 16GB, PCIe, SXM2): Graphics Card for Deep Learning and High-Performance Computing

Below is a comparison table for the Tesla V100 32GB, Tesla V100 16GB, Tesla V100 PCIe, and Tesla V100 SXM2 graphics cards. All four are built on NVIDIA’s Volta architecture and are designed for high-performance computing, AI, and machine learning workloads.

| Feature | Tesla V100 32GB | Tesla V100 16GB | Tesla V100 PCIe | Tesla V100 SXM2 |
|---|---|---|---|---|
| GPU Architecture | Volta | Volta | Volta | Volta |
| Form Factor | SXM2 | SXM2 | PCIe | SXM2 |
| Memory Size | 32 GB HBM2 | 16 GB HBM2 | 16 GB HBM2 | 16 GB HBM2 |
| Memory Bandwidth | 900 GB/s | 900 GB/s | 900 GB/s | 900 GB/s |
| FP32 Performance | 15.7 TFLOPS | 15.7 TFLOPS | 14 TFLOPS | 15.7 TFLOPS |
| FP64 Performance | 7.8 TFLOPS | 7.8 TFLOPS | 7 TFLOPS | 7.8 TFLOPS |
| Tensor Performance (FP16) | 125 TFLOPS | 125 TFLOPS | 112 TFLOPS | 125 TFLOPS |
| INT8 Performance | 62 TOPS | 62 TOPS | 56 TOPS | 62 TOPS |
| Tensor Cores | 1st Gen Tensor Cores | 1st Gen Tensor Cores | 1st Gen Tensor Cores | 1st Gen Tensor Cores |
| NVLink Support | Yes (300 GB/s) | Yes (300 GB/s) | No | Yes (300 GB/s) |
| PCIe Interface | N/A | N/A | PCIe 3.0 x16 | N/A |
| Power Consumption | 300 W | 300 W | 250 W | 300 W |
| Cooling Solution | Passive (requires system cooling) | Passive (requires system cooling) | Passive (requires system cooling) | Passive (requires system cooling) |
| Use Case | Data centers, AI, HPC | Data centers, AI, HPC | Workstations, AI, HPC | Data centers, AI, HPC |
| Multi-GPU Scaling | Excellent (via NVLink) | Excellent (via NVLink) | Limited (no NVLink support) | Excellent (via NVLink) |
| Release Date | 2018 | 2017 | 2017 | 2017 |
| Price (Approx.) | Higher (due to double memory) | High | Moderate | High |
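To put the peak figures in the table side by side, the short Python sketch below computes the PCIe card's throughput as a fraction of the SXM2 card's for each precision. The dictionary and function names are illustrative only, and the numbers are the vendor peak values from the table, not measured results.

```python
# Peak figures copied from the comparison table above
# (TFLOPS for fp32/fp64/tensor, TOPS for int8).
SXM2_PEAK = {"fp32": 15.7, "fp64": 7.8, "tensor": 125.0, "int8": 62.0}
PCIE_PEAK = {"fp32": 14.0, "fp64": 7.0, "tensor": 112.0, "int8": 56.0}


def pcie_fraction(metric: str) -> float:
    """Return the PCIe card's peak throughput as a fraction of the SXM2 card's."""
    return PCIE_PEAK[metric] / SXM2_PEAK[metric]


if __name__ == "__main__":
    for metric in SXM2_PEAK:
        print(f"{metric}: PCIe reaches {pcie_fraction(metric):.1%} of SXM2 peak")
```

On these figures the PCIe card lands at roughly 89 to 90 percent of the SXM2 peak in every precision, so the practical difference between the two comes mainly from NVLink and power budget rather than raw throughput.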

Tesla V100 32GB: Unmatched Memory for Large-Scale Workloads

- Memory: Equipped with 32GB of HBM2 memory, the Tesla V100 32GB delivers 900GB/s of memory bandwidth, making it ideal for memory-intensive AI and HPC applications.

- Performance: Features 7.8 TFLOPS of double-precision (FP64) and 125 TFLOPS of deep learning performance with Tensor Cores.

- Use Cases: Perfect for large-scale AI training, scientific simulations, and data analytics that require massive memory capacity.
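As a rough way to judge whether a training job needs the 32GB card, the sketch below estimates the memory taken by model state alone. It assumes mixed-precision training with the Adam optimizer at about 16 bytes per parameter (FP16 weights and gradients plus FP32 master weights and two optimizer moments), and it reserves 20% of the card for activations and framework overhead. Both values are assumptions made for illustration, not figures from NVIDIA, and the function names are hypothetical.

```python
# Assumption: 2 B fp16 weights + 2 B fp16 gradients
# + 12 B fp32 master weights and two Adam moments = 16 B per parameter.
BYTES_PER_PARAM = 16


def training_state_gb(num_params: int, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Estimate model-state memory in GB (decimal), ignoring activations."""
    return num_params * bytes_per_param / 1e9


def fits_on_card(num_params: int, card_gb: float, usable_fraction: float = 0.8) -> bool:
    """Check whether model state fits in the assumed usable share of card memory."""
    return training_state_gb(num_params) <= card_gb * usable_fraction


if __name__ == "__main__":
    params = 1_500_000_000  # hypothetical 1.5-billion-parameter model
    print(f"Model state: {training_state_gb(params):.1f} GB")
    print("Fits on 16 GB card:", fits_on_card(params, 16))
    print("Fits on 32 GB card:", fits_on_card(params, 32))
```

Under these assumptions a 1.5-billion-parameter model needs about 24 GB for model state, which fits on the 32GB card but not on a 16GB one. Real requirements depend heavily on batch size, sequence length, and the framework used.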

Tesla V100 16GB: High-Performance Computing and AI Acceleration

- Memory: Comes with 16GB of HBM2 memory and 900GB/s of memory bandwidth, providing excellent performance for a wide range of workloads.

- Performance: Delivers 7.8 TFLOPS of FP64 performance and 125 TFLOPS of deep learning performance with Tensor Cores.

- Use Cases: Ideal for AI training, inference, and HPC tasks in data centers and research labs.

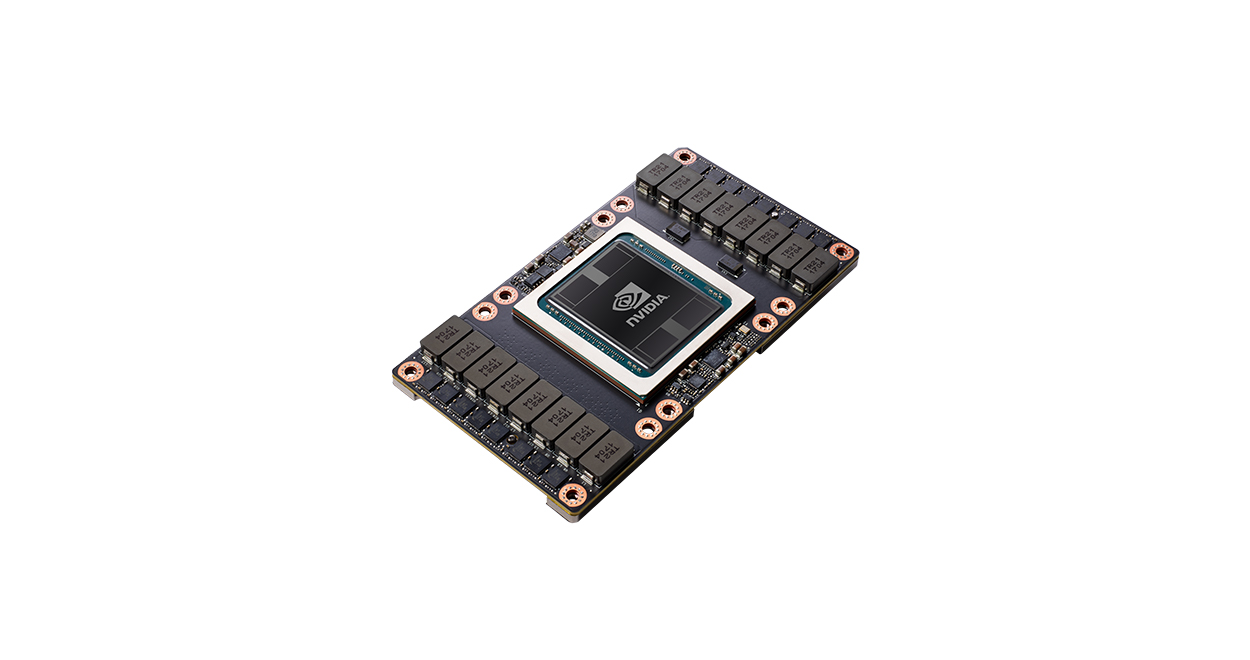

Tesla V100 PCIe: Flexible and Scalable for Diverse Workloads

- Form Factor: Designed in a PCIe form factor, the Tesla V100 PCIe is easy to integrate into existing servers and workstations, offering flexibility for data centers and enterprise environments.

- Cooling: Uses a dual-slot, passively cooled design that relies on chassis airflow for thermal management.

- Power Efficiency: With a maximum power consumption of 250W, it balances performance and energy efficiency.

- Use Cases: Suitable for AI development, HPC, data analytics, and cloud computing.
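The power-efficiency point can be checked with simple arithmetic on the listed peak figures. The sketch below divides peak FP32 throughput by rated board power for the PCIe card (14 TFLOPS at 250 W) and the SXM2 card (15.7 TFLOPS at 300 W). The function name is illustrative, and peak-per-watt is only a proxy: sustained efficiency depends on the workload.

```python
def gflops_per_watt(peak_tflops: float, board_power_watts: float) -> float:
    """Convert a peak TFLOPS rating and board power into GFLOPS per watt."""
    return peak_tflops * 1000.0 / board_power_watts


if __name__ == "__main__":
    # FP32 peak and rated power as listed on this page.
    pcie = gflops_per_watt(14.0, 250.0)
    sxm2 = gflops_per_watt(15.7, 300.0)
    print(f"PCIe: {pcie:.1f} GFLOPS/W")
    print(f"SXM2: {sxm2:.1f} GFLOPS/W")
    print(f"PCIe advantage: {pcie / sxm2 - 1:.1%}")
```

By this measure the PCIe card delivers about 56 GFLOPS per watt against roughly 52 for the SXM2 module, an edge of around 7 percent at peak FP32.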

Tesla V100 SXM2: Optimized for Data Center Performance

- Form Factor: The SXM2 version is designed for NVIDIA DGX systems and other data center environments, offering optimized performance and scalability.

- Cooling: Utilizes a passive cooling design, relying on system-level cooling solutions for efficient thermal management.

- Performance: Delivers 7.8 TFLOPS of FP64 performance and 125 TFLOPS of deep learning performance with Tensor Cores, compared with 7 TFLOPS and 112 TFLOPS for the PCIe card.

- Use Cases: Ideal for large-scale AI training, HPC clusters, and data center deployments.
